LLM research moves fast. Really fast. One week, a model struggles with basic reasoning. The next week, it’s writing code, passing exams, and doing things no one expected.

But keeping up with all of it? That’s where things get tricky. There’s so much noise out there. So many papers, so many claims, and so many “big announcements” that turn out to be nothing.

The good news is that a handful of real breakthroughs are actually changing the game right now. And they’re not just interesting on paper; they have real-world impact.

This blog breaks down the latest updates in LLM research that actually matter.

Major Breakthroughs in LLM Research

LLM research has hit some major milestones recently. These breakthroughs are reshaping how AI models think, reason, and perform.

1. Longer Context Windows: Models can now process much more text at once. This means a better understanding of long documents and conversations.

2. Improved Reasoning Abilities: LLMs are getting better at solving multi-step problems. They now handle logic and math with far greater accuracy.

3. Multimodal Capabilities: Models no longer just read text. They can now process images, audio, and video together in one go.

4. Faster Inference Speeds: New techniques are making models respond more quickly. This cuts down wait time without sacrificing output quality.

5. Better Alignment With Human Intent: Models are getting sharper at understanding what users actually want. Responses feel more on-point and less robotic.

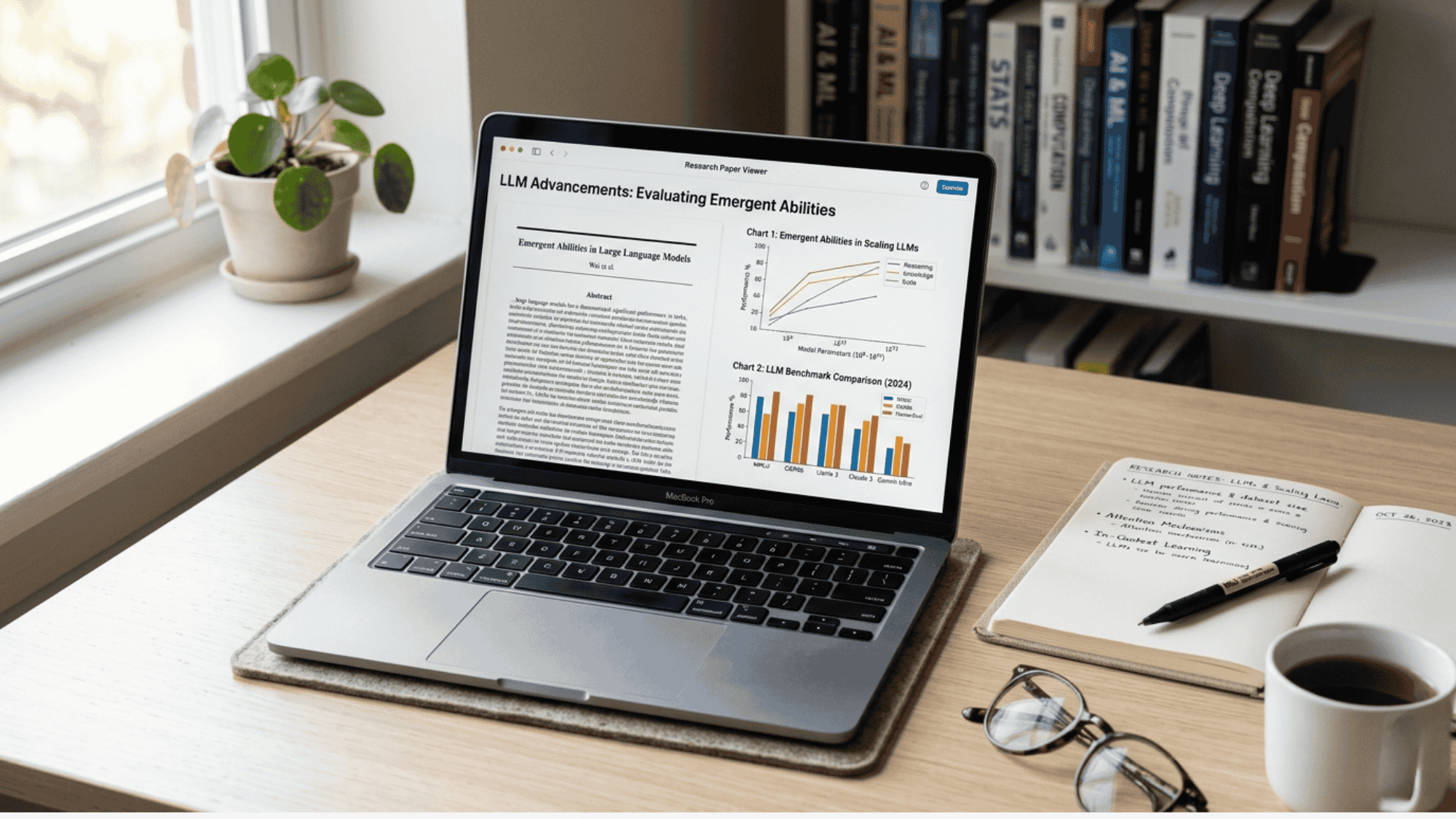

Recent Studies & Academic Research on LLMs

Academic research on LLMs is moving at a rapid pace. New studies are shedding light on how these models learn and improve.

1. Chain-of-Thought Prompting Studies

Researchers found that asking models to “think step by step” leads to much better answers. This method, known as chain-of-thought prompting, helps LLMs break down hard problems into smaller parts.

Studies show it significantly improves performance on reasoning and math tasks. It’s a simple tweak with a surprisingly big impact on output quality.

2. Research on Model Scaling Laws

Studies confirm that bigger models generally perform better, but only up to a point.

Recent research shows that smarter training data matters just as much as model size. Throwing more parameters at a problem doesn’t always help.

The focus is now shifting toward training efficiency rather than just building larger models.

3. Findings on Hallucination Reduction

One of the biggest problems with LLMs is that they sometimes make things up.

Recent studies are tackling this head-on. Researchers are testing new methods like retrieval-augmented generation to ground model responses in real data.

Early results look promising, and hallucination rates are dropping with each new approach being tested.

4. Studies on Few-Shot & Zero-Shot Learning

New research shows LLMs can perform well on tasks they’ve never been trained on directly. Few-shot learning uses just a handful of examples to guide the model. Zero-shot learning uses none at all.

Studies show that well-structured prompts can get strong results even without task-specific fine-tuning, which opens up a lot of practical possibilities

Key Trends in LLM Development

LLM development is shifting in some clear directions. These trends are shaping what the next generation of AI models will look like.

- Smaller, More Efficient Models: Developers are building compact models that punch above their weight. High performance is no longer reserved for massive, resource-heavy systems.

- Open-Source LLM Growth: More powerful open-source models are entering the space. This is giving developers and researchers greater freedom to build and experiment.

- Fine-Tuning for Specific Industries: General-purpose models are being adapted for fields like healthcare and law. Targeted fine-tuning is making LLMs far more useful in specialized settings.

- Retrieval-Augmented Generation (RAG): Models are being paired with external knowledge sources. This helps them give more accurate, up-to-date answers without retraining from scratch.

- Agent-Based AI Systems: LLMs are being used as the backbone of autonomous AI agents. These agents can plan, take actions, and complete multi-step tasks on their own.

- Focus on Energy Efficiency: Training large models consumes a lot of power. Researchers are now actively working on greener, more sustainable ways to build and run LLMs.

Top Companies Leading LLM Innovation

A few big names are driving most of the progress in LLM development. This is a look at who’s leading the charge.

1. OpenAI: OpenAI continues to push boundaries with its GPT model series. Their research on reasoning and alignment remains among the most closely watched in the field.

2. Google DeepMind: Google DeepMind is combining world-class research with large-scale infrastructure. Their Gemini model series is giving other frontier models a serious run for their money.

3. Anthropic: Anthropic is heavily focused on building safer and more reliable AI systems. Their Claude model series reflects a strong commitment to responsible LLM development and research.

4. Meta AI: Meta is making waves with its open-source LLaMA model series. Their decision to release models publicly has had a huge impact on the broader research community.

5. Mistral AI: Mistral is proving that smaller teams can still build highly competitive models. Their lightweight yet powerful models have earned a strong reputation among developers worldwide.

6. Microsoft Research: Microsoft is deeply invested in LLM research through its partnership with OpenAI. Their work on integrating LLMs into real-world products is setting new industry standards.

7. Cohere: Cohere focuses on building LLMs designed specifically for enterprise use cases. Their models are built with business reliability, data security, and practical deployment firmly in mind.

Real-World Applications of Latest LLM Research

LLM research isn’t just happening in labs. It’s making its way into everyday tools and industries at a pretty remarkable pace.

Healthcare is one of the biggest areas seeing real change. Models are helping doctors sift through patient records, flag potential diagnoses, and even assist with medical documentation.

In the legal field, LLMs are being used to review contracts, summarize case files, and speed up research that used to take hours.

Customer service has changed a lot, too. AI-powered assistants are handling complex queries with much greater accuracy than before. In education, LLMs are being used to build personal learning tools that adapt to each student’s needs.

The research is clearly moving beyond theory. It’s showing up in products and workflows that real people use every day.

Challenges & Limitations in Current LLMs

LLMs have come a long way, but they’re far from perfect. Some serious challenges still stand in the way of progress.

- Hallucination Problems: LLMs sometimes generate confident but completely wrong information. This remains one of the hardest problems researchers are actively trying to fix right now.

- High Computational Costs: Training and running large models require substantial computing power. This makes cutting-edge LLMs expensive and inaccessible for many smaller teams and organizations.

- Bias in Training Data: Models learn from human-generated data, which carries its own biases. This can lead to outputs that reflect unfair or skewed perspectives in subtle ways.

- Lack of True Reasoning: LLMs are good at pattern matching but struggle with genuine logical reasoning. They can appear smart while still missing the problem entirely.

- Context Window Limitations: Even with improvements, models still lose track of details in very long conversations. Maintaining accuracy over extended interactions remains a real and ongoing technical challenge.

- Data Privacy Concerns: LLMs trained on large datasets can sometimes expose sensitive information. This raises serious questions about how training data is collected, stored, and used responsibly.

- Vulnerability to Prompt Injection: Bad actors can craft inputs that manipulate model behavior in harmful ways. This poses a significant security risk, especially in production and enterprise environments.

Future of LLM Research: What’s Next

The future of LLM research looks both exciting and complex. Researchers are pushing in several new directions at once, and the pace isn’t slowing down anytime soon.

One big area of focus is making models more reliable and grounded in facts. Hallucination reduction is high on every research team’s priority list.

Another direction gaining serious traction is improving LLMs’ long-term memory. Right now, models forget context between sessions. That’s something researchers are actively working to change.

There’s also growing interest in building models that can reason more like humans. Not just predicting the next word, but actually thinking through problems step by step.

Energy efficiency is another frontier. As models grow more capable, keeping their environmental footprint in check is becoming just as important as raw performance.

To Conclude

LLM research is moving in a clear direction toward smarter, safer, and more useful models in the real world. The breakthroughs happening right now are laying the ground for what AI will look like in the next few years.

The gap between those who understand these changes and those who don’t is only going to grow wider.

Got thoughts on which LLM development stands out the most?

Drop them in the comments below. And if this was helpful, share it with someone who’s trying to keep up with the AI space.